Human Error in Cybersecurity

Human error in cybersecurity is not simply a story about careless users. It is a systems problem shaped by cognition, design, workload, culture, incentives, and organizational structure.

Human Error Is a Systems Problem

Human error remains one of the most persistent drivers of security incidents because digital environments are often designed for idealized behavior rather than for how people actually work. Employees face time pressure, heavy routine workloads, fragmented interfaces, and competing incentives. Under these conditions, mistakes are predictable outcomes rather than isolated failures.

This connects directly with the logic explained in How Cybersecurity Shapes the Modern World, where cybersecurity is presented as a structural layer of modern civilization rather than a narrow technical function.

Cybersecurity Is Not Only a Technical Problem

Networks, code, segmentation, access management, monitoring, and endpoint protection are essential. But every one of those systems still depends on people: users, administrators, analysts, managers, and decision-makers. Every alert must be interpreted, every privilege assigned, every exception approved.

Technology and human behavior are therefore inseparable. A technically mature environment can still be operationally fragile when people are overloaded, unsupported, or rewarded for the wrong behavior.

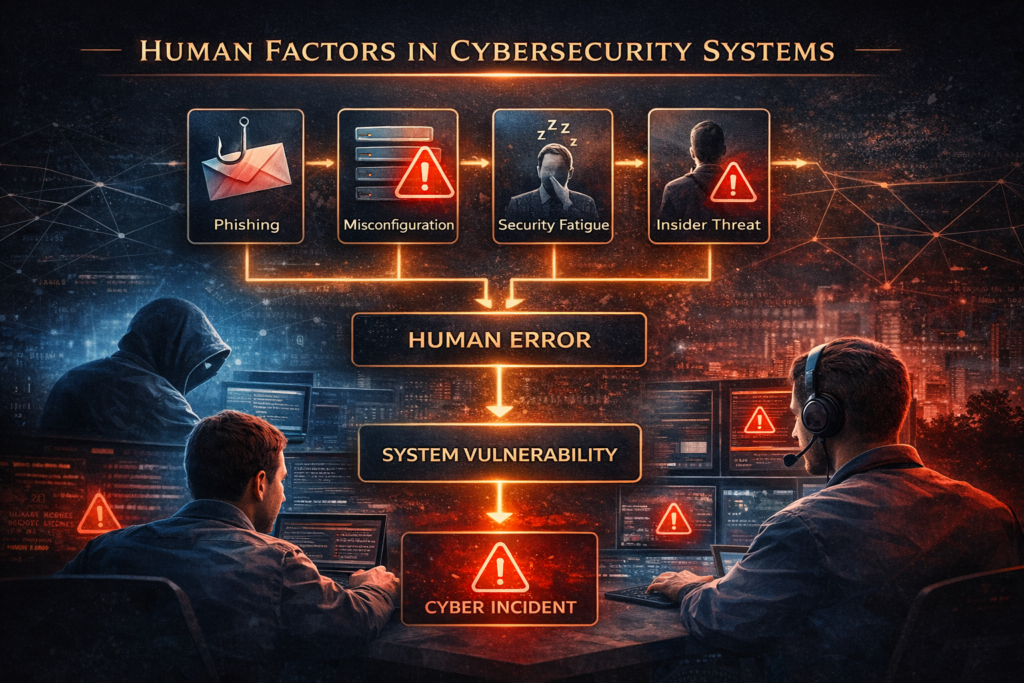

Why Human Error Remains So Powerful

Cognitive overload

Too many alerts, messages, prompts, and verification requests degrade attention and encourage reflexive clicking.

Time urgency

Users prioritize immediate tasks and deadlines over abstract security expectations.

Behavioral shortcuts

Password reuse, reflexive approval of prompts, and dismissed warnings emerge from daily workflow friction.

Social assumptions

People naturally trust familiar language, authority signals, and internal communication patterns.

This is why human error in cybersecurity should be analyzed as a predictable systems output rather than a moral failing.

The Myth of the Weakest Link

The phrase “humans are the weakest link” simplifies a complex issue into blame. It ignores design quality, operational burden, documentation, leadership incentives, and workflow realism.

A better framing: humans are not the weakest link. They are embedded actors inside a larger cyber system whose design strongly shapes behavior.

This systems framing aligns with What Is a Complex System? and Feedback Loops in Systems, where repeated outcomes are understood through structures and interactions rather than isolated events.

Phishing and Social Engineering

Phishing attacks are less about code and more about behavioral design. Attackers exploit urgency, authority, familiarity, and routine. They study the rhythms of organizations and imitate internal workflows.

That is why phishing succeeds even in technically strong environments. It targets the meeting point between systems and human cognition.
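
The behavioral cues named above (urgency, authority, routine) can be made concrete with a small sketch. The cue lists and the `tag_cues` function are hypothetical and chosen only for illustration. This is a teaching aid for spotting persuasion patterns in a message, not a phishing detector, and real attackers vary their wording far beyond any fixed list.

```python
import re

# Hypothetical cue phrases, grouped by the lever they pull.
# These lists are illustrative assumptions, not a vetted taxonomy.
CUES = {
    "urgency": ["urgent", "immediately", "today", "within 24 hours", "deadline"],
    "authority": ["ceo", "director", "it department", "finance team"],
    "routine": ["invoice", "password reset", "shared document", "timesheet"],
}


def tag_cues(message: str) -> dict[str, list[str]]:
    """Return the cue phrases from each category that appear in the message."""
    text = message.lower()
    found: dict[str, list[str]] = {}
    for category, phrases in CUES.items():
        # Whole-word match so that "today" does not match inside another word.
        hits = [p for p in phrases if re.search(r"\b" + re.escape(p) + r"\b", text)]
        if hits:
            found[category] = hits
    return found


sample = "Urgent: the CEO needs this invoice approved today."
print(tag_cues(sample))
# {'urgency': ['urgent', 'today'], 'authority': ['ceo'], 'routine': ['invoice']}
```

A message that combines all three levers, as the sample does, looks like ordinary internal work, which is the point: the attack imitates the workflow rather than breaking the technology.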

Misconfiguration and Administrative Error

Some of the most severe incidents come not from end-user clicks but from administrative mistakes: exposed cloud storage, excessive privileges, incomplete logging, delayed patching, or broken backups.

These issues connect strongly to Emergence in Complex Systems, because small local configuration choices can scale into large systemic vulnerabilities.
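
The administrative mistakes listed above can be expressed as simple automated checks, so that catching them does not depend on someone remembering to look. The sketch below is a minimal illustration under stated assumptions: the `ResourceConfig` fields, the `audit` function, and the thresholds are all hypothetical, and real platforms expose these settings under different names and with more nuance.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ResourceConfig:
    # Hypothetical fields for illustration only.
    name: str
    publicly_readable: bool
    admin_principals: int
    logging_enabled: bool
    days_since_last_patch: int
    days_since_restore_test: Optional[int]  # None means a restore was never tested


def audit(cfg: ResourceConfig, max_admins: int = 3,
          max_patch_age_days: int = 30,
          max_restore_test_age_days: int = 90) -> list[str]:
    """Return one finding per risky setting. Thresholds are assumed defaults."""
    findings = []
    if cfg.publicly_readable:
        findings.append(f"{cfg.name}: storage is publicly readable")
    if cfg.admin_principals > max_admins:
        findings.append(f"{cfg.name}: {cfg.admin_principals} admin principals (limit {max_admins})")
    if not cfg.logging_enabled:
        findings.append(f"{cfg.name}: logging is disabled")
    if cfg.days_since_last_patch > max_patch_age_days:
        findings.append(f"{cfg.name}: last patched {cfg.days_since_last_patch} days ago")
    if (cfg.days_since_restore_test is None
            or cfg.days_since_restore_test > max_restore_test_age_days):
        findings.append(f"{cfg.name}: backup restore not tested recently")
    return findings


bucket = ResourceConfig("reports-archive", True, 7, False, 45, None)
for finding in audit(bucket):
    print(finding)
```

Each check is trivial on its own. The value lies in running all of them on every resource, because the risk comes from many small local choices accumulating unnoticed.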

Security Fatigue and Constant Vigilance

Security fatigue emerges when users are asked to maintain constant vigilance in environments filled with interruptions and friction. Over time, compliance becomes ritual rather than conscious decision-making.

This creates the illusion of secure behavior while actual attention declines.

Culture and Incentives

Organizational culture determines whether secure behavior is operationally viable. If speed is rewarded more than verification, users will skip controls. If reporting suspicious behavior leads to blame, users remain silent.

Cybersecurity therefore depends as much on leadership and culture as on technical tooling.

Systems Thinking: Error as Design Signal

Human error should be treated as a design signal. Instead of asking only who made the mistake, serious analysis asks what made the mistake likely, repeatable, and consequential.

Final Position

Human error in cybersecurity is not a weakness that can be eliminated. It is a permanent design condition of digital systems. The most resilient organizations are not those that expect perfect users, but those that build environments where mistakes are less likely, less damaging, easier to detect, and easier to recover from.