Read the article as structure, not as isolated events


Systems thinking is no longer a niche intellectual framework. In a world shaped by interconnected technologies, fragile infrastructure, geopolitical shocks, and cascading cyber risks, it has become one of the most important ways to understand reality.

The modern world is not built from isolated events. Economies, digital networks, societies, institutions, and individual decisions continuously influence one another through hidden structures, delayed effects, and feedback loops. What appears simple on the surface is often the visible expression of a much deeper system.

Yet many people are still trained to think in fragments: isolated problems, simple causes, and quick solutions. This mismatch between reality and the way we think is one of the defining challenges of the twenty-first century.

Systems thinking offers a different approach. Instead of looking at parts in isolation, it focuses on the relationships between those parts. It asks not only what is happening, but how things influence each other over time, what patterns repeat, where hidden dependencies exist, and why certain outcomes keep returning even when we think we have solved the problem.

That is exactly why systems thinking matters: it gives us a way to understand complexity without pretending the world is simple.

Complexity is rarely chaos. More often, complexity is structure moving faster than surface-level thinking can follow. Systems thinking helps make that structure visible.

Systems Thinking vs Linear Thinking

Traditional problem-solving often follows a linear model:

Problem → Cause → Solution

This approach works well in simple environments. If a machine stops working, you identify the faulty part and replace it. The cause is clear, the intervention is direct, and the effect is immediate.

But many real-world problems do not behave like machines.

Linear thinking: simple cause, direct fix

- single cause

- short-term intervention

- visible event chain

- limited dependency awareness

Systems thinking: patterns, loops, dependencies

- multiple interacting causes

- feedback loops

- delays and hidden dependencies

- emergent outcomes

Consider climate change, economic crises, cybersecurity threats, energy grid congestion, migration pressure, geopolitical conflict, and supply chain disruption. These issues involve multiple actors, competing incentives, feedback loops, delayed effects, and unpredictable interactions.

A single cause rarely explains the outcome. What looks like one problem is often the result of a structure that has been developing over time.

Linear thinking struggles in these environments because it assumes simplicity where complexity exists. It focuses on visible events rather than the structures that produce those events. That is why many solutions only treat symptoms, while the deeper dynamics remain untouched.

Systems thinking begins with a different assumption: problems are rarely isolated. They are embedded within larger structures.

To understand recurring problems, we must stop asking only what happened and start asking what system made this outcome likely.

How Systems Thinking Explains Complex Systems

A system is a collection of elements that interact with one another to produce a pattern of behavior over time. The parts matter, but the relationships between the parts matter even more.

Examples of systems include ecosystems, financial markets, transportation networks, organizations, digital platforms, national economies, healthcare systems, and energy infrastructure.

Even a city is a system. Infrastructure, governance, culture, technology, law, and human behavior interact continuously. Change one part of that web, and the effects can travel far beyond the original intervention.

The key insight of systems thinking is that the behavior of the whole cannot be understood by examining its parts separately. A system is not just a sum of components. It is a pattern of relationships.

Systems thinking helps us see that relationships generate patterns, and patterns generate outcomes.

Systems thinking shows that small changes in one area can produce large and unexpected consequences elsewhere. In complex systems, outcomes are shaped not only by what exists, but by how everything connects.

That idea matters across nearly every major domain of modern life. It matters in economics, where confidence and policy interact. It matters in technology, where software, users, incentives, and law collide. It matters in history, where institutions outlive leaders. And it matters in culture, where identities are not static facts but evolving social systems.

If you want to build better institutions, understand social change, or navigate technological disruption, you need to see systems rather than fragments.

Systems Thinking, Feedback Loops and Emergence

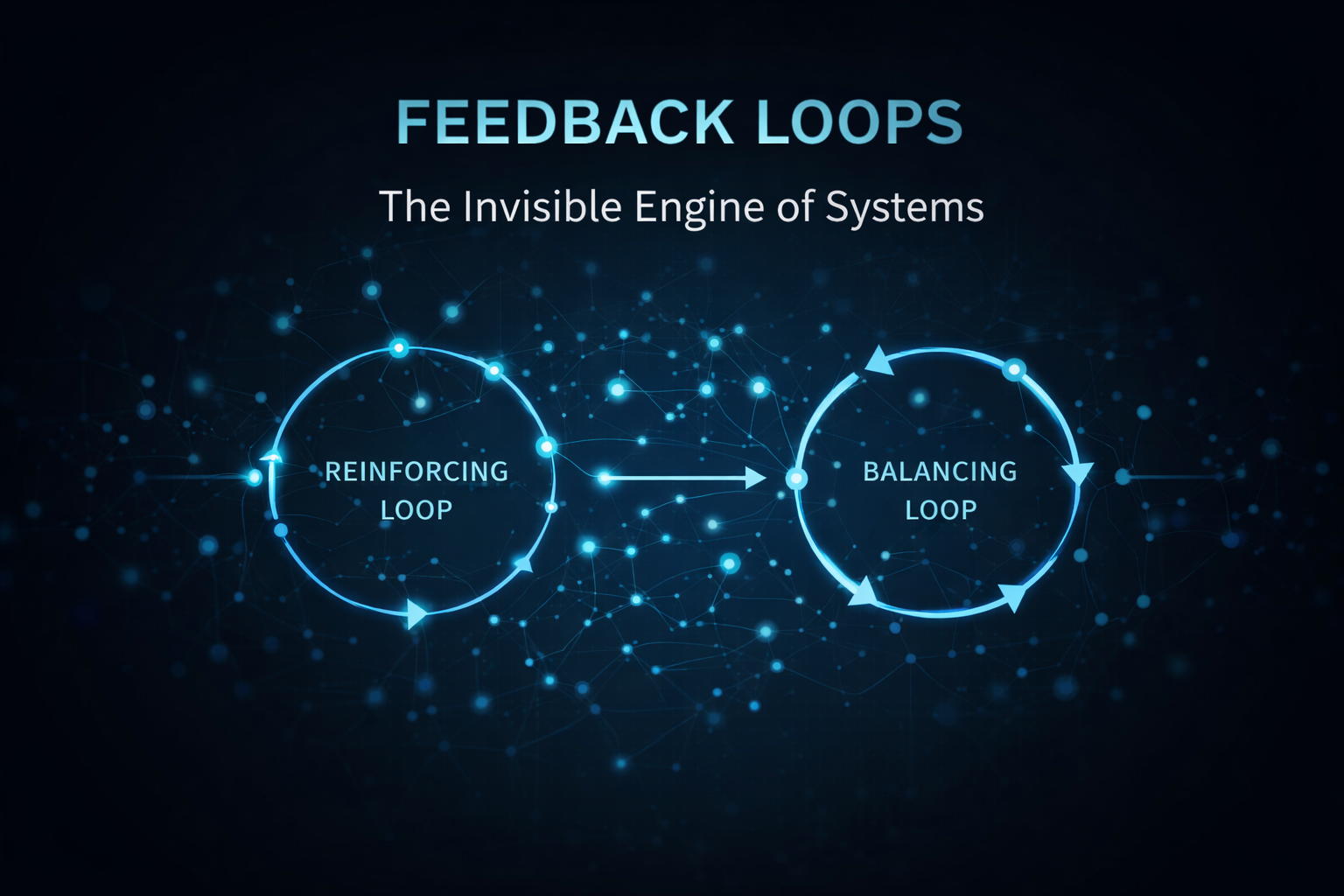

One of the core concepts in systems thinking is the feedback loop.

Feedback loops occur when the output of a system influences its own future behavior. In other words, the consequences of an action do not disappear. They feed back into the system and shape what happens next.

Systems thinking and amplification

Reinforcing loops amplify change. Innovation attracts investment, which accelerates innovation, which attracts even more investment.

Systems thinking and stability

Balancing loops stabilize systems. Supply and demand adjustments help absorb excess movement and restore equilibrium.

These loops create patterns that are often difficult to predict when we focus only on individual events. They are one reason complex systems behave differently from simple mechanical systems.
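The two loop types can be made concrete in a few lines of Python. What follows is a minimal sketch, not a model of any real market: the function names, the growth rate, the target, and the adjustment rate are all assumptions chosen only to make the two shapes visible.

```python
def reinforcing_loop(initial, growth_rate, steps):
    """Each step feeds a fraction of the current value back into itself."""
    values = [initial]
    for _ in range(steps):
        values.append(values[-1] + growth_rate * values[-1])
    return values


def balancing_loop(initial, target, adjustment_rate, steps):
    """Each step closes a fraction of the gap between the value and a target."""
    values = [initial]
    for _ in range(steps):
        gap = target - values[-1]
        values.append(values[-1] + adjustment_rate * gap)
    return values


# Illustrative numbers only (assumptions, not data).
investment = reinforcing_loop(initial=100.0, growth_rate=0.1, steps=10)
price = balancing_loop(initial=150.0, target=100.0, adjustment_rate=0.5, steps=10)

for step, (inv, pr) in enumerate(zip(investment, price)):
    print(f"step {step:2d}  reinforcing={inv:8.2f}  balancing={pr:8.2f}")
```

The reinforcing series grows by a larger amount at every step, while the balancing series closes half of the remaining gap each step and settles near its target. Same kind of rule, opposite sign on the feedback, very different behavior over time.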

This is where systems thinking becomes powerful: it teaches us to look for loops, recurring patterns, and system-wide effects rather than one-off explanations.

Another key concept is emergence. Emergent behavior arises when interactions between components create outcomes that were not explicitly designed or centrally planned.

Traffic jams appear without a central controller. Financial bubbles emerge from collective behavior. Social media outrage spreads through network effects. Institutional cultures form without a single author. Market panic can grow from many rational local decisions.

No single actor controls these outcomes, yet they shape entire societies. This is one of the most important lessons of systems thinking: the world is often governed by interaction effects rather than direct command.
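Emergence is easier to believe once you watch it happen. The sketch below uses a well-known toy traffic model (the elementary cellular automaton usually called Rule 184) on a ring road. The road length, the starting layout, and the helper names are assumptions made for illustration. Every car follows one local rule: move forward if the cell ahead is free, otherwise wait. Nothing in the code describes a traffic jam.

```python
def step(road):
    """Advance the ring road by one tick: a car moves only if the next cell is free."""
    n = len(road)
    new_road = [0] * n
    for i, cell in enumerate(road):
        if cell == 1:
            ahead = (i + 1) % n
            if road[ahead] == 0:
                new_road[ahead] = 1  # free cell ahead: the car moves forward
            else:
                new_road[i] = 1      # blocked: the car waits where it is
    return new_road


def render(road):
    """Draw cars as '#' and empty cells as '.'."""
    return "".join("#" if cell else "." for cell in road)


# Hypothetical starting layout: a dense cluster followed by looser traffic.
road = [1, 1, 1, 1, 1, 1, 0, 1, 0, 1, 0, 0]
history = [road]
for _ in range(8):
    history.append(step(history[-1]))

for tick, state in enumerate(history):
    print(f"t={tick}  {render(state)}")
```

Reading the printed rows from top to bottom, look at where the blocked cluster sits over time rather than at any single car. The jam is a property of the interactions, not of any one driver's decision, which is the sense in which the article calls such outcomes emergent.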

Why Systems Thinking Matters for Cybersecurity and Infrastructure

This is where systems thinking becomes operational. It is not just abstract theory: it becomes concrete in cyber risk, infrastructure fragility, identity exposure, and cascading failure across modern institutions.

One reason systems thinking matters so much today is that modern risk rarely emerges from a single isolated failure. In critical infrastructure, cybersecurity, finance, and public governance, failures are often cascading rather than local.

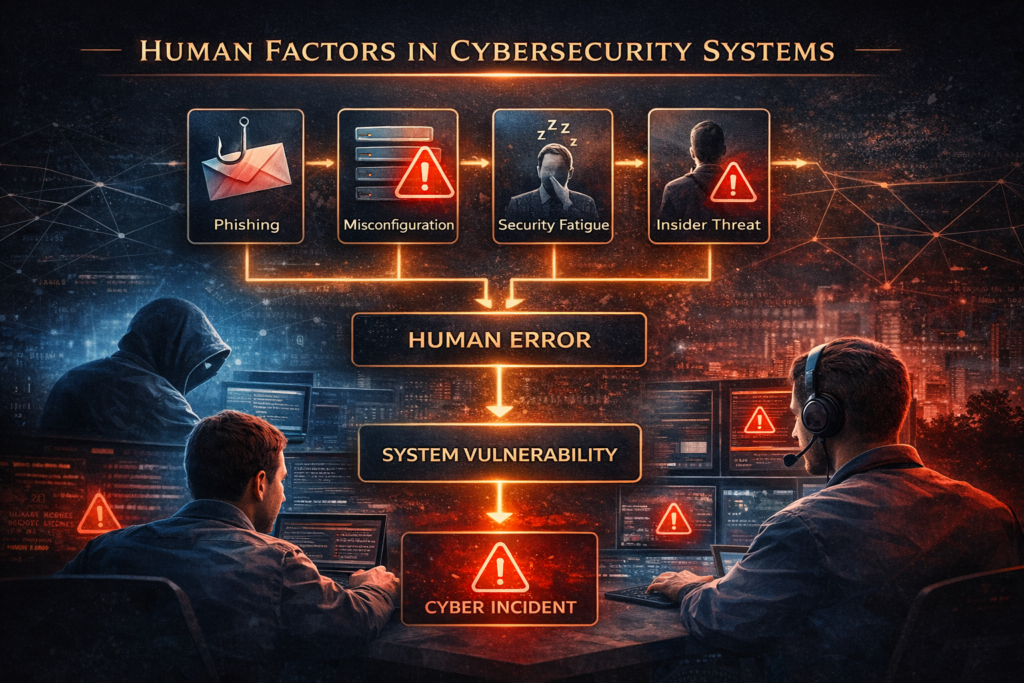

In cybersecurity, an incident is rarely just a technical problem. A phishing email might seem small at first, but its real consequences depend on identity management, employee awareness, access rights, network segmentation, vendor exposure, backup resilience, incident response maturity, and leadership decisions under pressure.

That means a cyberattack is not only about malicious code. It is about the interaction between technology, process, governance, and human behavior. The system determines the severity of the breach.

Systems thinking shows that cyber incidents are not isolated technical moments. They spread through dependencies, permissions, human behavior, governance weaknesses, and technical architecture at the same time.
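One way to make "the system determines the severity" tangible is to treat access paths as a graph and ask how far a single compromise can travel. The following is a rough sketch under stated assumptions: the asset names and the edges between them are entirely hypothetical, and a real environment would need a far richer model of permissions and controls.

```python
from collections import deque

# Hypothetical access graph: an edge A -> B means that compromising A
# can give an attacker a path to B. All names are invented for illustration.
ACCESS = {
    "phished_mailbox": ["sso_session"],
    "sso_session": ["file_share", "vpn"],
    "vpn": ["flat_network"],
    "flat_network": ["backup_server", "erp"],
    "file_share": [],
    "backup_server": [],
    "erp": [],
}


def blast_radius(graph, start):
    """Breadth-first search: everything reachable from the first compromised asset."""
    reached = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in reached:
                reached.add(nxt)
                queue.append(nxt)
    return reached - {start}


def without_edge(graph, source, target):
    """Return a copy of the graph with one access path removed."""
    pruned = {node: list(targets) for node, targets in graph.items()}
    pruned[source] = [t for t in pruned[source] if t != target]
    return pruned


before = blast_radius(ACCESS, "phished_mailbox")
# Assumed control: network segmentation removes the VPN -> flat network path.
after = blast_radius(without_edge(ACCESS, "vpn", "flat_network"), "phished_mailbox")

print("reachable before segmentation:", sorted(before))
print("reachable after segmentation: ", sorted(after))
```

The phishing email is identical in both runs. What changes is the structure around it: removing a single dependency keeps the backup server and the ERP system out of reach in this toy graph.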

The same applies to infrastructure. Energy systems are no longer simple industrial machines operating in isolation. They are embedded in regulatory systems, investment cycles, climate policy, geopolitical dependence, data systems, labor capacity, public trust, and digital control environments.

Take energy grid congestion as an example. It is not caused by one bad decision. It emerges from interacting pressures: electrification, renewable integration, permit delays, physical grid limitations, industrial demand, spatial planning, regulatory frameworks, and long infrastructure lead times. Looking for one single cause misses the real system.

That is why systems thinking is becoming a strategic necessity for risk management. It helps organizations move beyond checkbox compliance and start understanding how vulnerabilities propagate through interconnected structures.

For cybersecurity professionals, policymakers, and infrastructure operators, this shift matters. It means asking not only, “Where is the fault?” but also, “What dependencies made this failure dangerous?”

For more on security, governance, and infrastructure strategy, see our broader work on Cybersecurity & Technology.

Systems Thinking and Global Interconnection

Supply chains, financial markets, communication platforms, and digital infrastructure now operate on a global scale. Events in one region can influence outcomes thousands of kilometers away.

A disruption in semiconductor production can affect the automotive industry worldwide. A conflict near a shipping corridor can reshape prices and delivery schedules far beyond the immediate region. A software vulnerability in one vendor can cascade across thousands of dependent organizations.

Understanding these relationships requires more than event-based analysis. It requires a systemic perspective capable of seeing dependencies, delays, and second-order effects.

Systems Thinking and Technological Acceleration

Artificial intelligence, automation, cloud infrastructure, and digital platforms are transforming industries at extraordinary speed. But technological systems do not operate in isolation. They interact with legal systems, labor markets, public institutions, financial incentives, and cultural norms.

Decisions made in one domain often produce consequences in another. A new AI deployment may affect productivity, privacy, regulatory risk, and social trust all at once. Without systems thinking, it becomes difficult to anticipate these interactions before they become problems.

Systems Thinking and Policy Consequences

Governments increasingly face challenges that cannot be solved with simple interventions. Energy transitions, migration, housing shortages, climate adaptation, public health, and digital sovereignty all involve interacting systems.

Policies designed without systemic awareness often create unintended consequences. A rule that solves one local issue may produce friction elsewhere. A short-term political fix may worsen a long-term structural problem. Systems thinking does not eliminate trade-offs, but it helps make them visible before they become crises.

The Strategic Advantage of Systems Thinking

For individuals, organizations, and institutions, systems thinking provides a major strategic advantage. It encourages long-term thinking, pattern recognition, anticipation of indirect effects, awareness of hidden dependencies, smarter prioritization, and more resilient intervention design.

Instead of reacting only to visible events, systems thinkers analyze the structures that produce those events. This shift, from events to structures, is transformative.

When you understand the structure of a system, you gain insight into where meaningful change can occur. These leverage points are often small interventions that produce disproportionately large outcomes because they affect the logic of the system itself.

The value of systems thinking lies in helping decision-makers move from reactive judgment to structural understanding.

The deepest advantage of systems thinking is not that it predicts everything. It is that it helps us stop being surprised by patterns we should have recognized earlier.

Systems Thinking in Practice

Applying systems thinking does not require advanced mathematics or complex software. It begins with a change in perspective and a better set of questions.

At its core, systems thinking is a practical discipline: it changes the questions we ask before we try to force solutions onto complex environments.

Can You Spot the System?

- What are the visible events?

- What hidden structure keeps producing them?

- Who are the actors in this system?

- Where do delays make the problem harder to see?

- What incentives reinforce the current outcome?

- Which small intervention could change the pattern?

This is how systems thinking starts in practice: not with abstraction for its own sake, but with learning to see the architecture beneath recurring outcomes.

Even a simple system map can reveal insights that linear analysis misses. Over time, this approach develops a deeper understanding of how complex environments behave.
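A system map can be as modest as a list of signed links between variables. The sketch below assumes the usual causal loop diagram convention: a link is +1 when cause and effect move in the same direction and -1 when they move in opposite directions, and a loop with an even number of negative links is reinforcing while an odd number makes it balancing. The variables and signs are illustrative assumptions borrowed from the article's own examples, not measured relationships.

```python
# Hypothetical causal links: (cause, effect) -> +1 same direction, -1 opposite.
LINKS = {
    ("innovation", "investment"): +1,
    ("investment", "innovation"): +1,
    ("price", "demand"): -1,
    ("demand", "price"): +1,
}


def find_loops(links):
    """Return each simple loop once, starting from its alphabetically first variable."""
    graph = {}
    for cause, effect in links:
        graph.setdefault(cause, []).append(effect)
    loops = []

    def walk(start, node, path):
        for nxt in graph.get(node, []):
            if nxt == start:
                loops.append(path[:])           # closed the loop
            elif nxt > start and nxt not in path:
                walk(start, nxt, path + [nxt])  # extend the path

    for start in sorted(graph):
        walk(start, start, [start])
    return loops


def classify(loop, links):
    """Multiply the link signs around the loop to decide its type."""
    sign = 1
    for i, cause in enumerate(loop):
        effect = loop[(i + 1) % len(loop)]
        sign *= links[(cause, effect)]
    return "reinforcing" if sign > 0 else "balancing"


for loop in find_loops(LINKS):
    print(" -> ".join(loop + [loop[0]]), "is", classify(loop, LINKS))
```

Writing the links down is the useful part. Once they exist, the loops that keep producing an outcome stop being a matter of intuition and become something a team can inspect and argue about.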

If you are leading a team, studying policy, analyzing infrastructure, researching history, or thinking seriously about cybersecurity, this perspective becomes increasingly valuable. The world rewards people who can see relationships others miss.

Why Systems Thinking Matters in a Complex World

The challenges of the twenty-first century are not simply larger versions of older problems. They are structurally different.

They involve networks rather than simple hierarchies. They evolve faster than traditional institutions. They produce effects that spread across borders, sectors, and disciplines. They are shaped by interactions rather than isolated causes.

To navigate such a world, we need tools that match its complexity. Systems thinking is one of those tools.

It allows us to move beyond fragmented perspectives and see the patterns that shape our collective future. It helps us understand why short-term fixes often fail, why hidden dependencies matter, and why resilience must be designed at the level of structure rather than appearance.

Understanding systems does not make the world simple. But it makes complexity more intelligible, and that is the first step toward acting wisely within it.

For a foundational introduction to systems thinking, Donella Meadows’ work remains essential, especially Thinking in Systems. For applied cybersecurity guidance in complex environments, resources from NIST and ENISA are also highly valuable.

Conclusion

The goal of systems thinking is not to simplify reality. It is to understand how complexity actually works.

In a world where technology, economies, infrastructure, and societies are increasingly interconnected, the ability to think in systems may become one of the most valuable skills of this century.

That is not because systems thinking gives us total control. It does not. But it gives us something more realistic and more powerful: a better map of the forces we are moving through.

And in a complex world, a better map is often the difference between reacting blindly and acting with intelligence.

If you are reading Darja Rihla from the beginning, this article is one of the foundations. It is not only about analysis. It is about learning to see the world as it really behaves.

You can also explore related work on Culture & Identity and the wider logic of structure, history, and modern systems across the platform.