Systems & Strategy · Civilizational Systems Analysis

Trust Is Infrastructure

The hidden operating layer beneath civilization, cybersecurity, and power.

Core thesis: civilization is not only a story of technology, markets, armies, laws, or culture. It is a trust-scaling problem. Every durable system must answer one question: how can strangers coordinate at scale without collapsing into suspicion, fraud, verification overload, or institutional paralysis?

01 · The invisible layer

The world does not run on technology first

People assume modern civilization runs on technology.

It does not.

Technology is only the visible surface layer. Underneath every cloud platform, banking system, institution, trade network, border checkpoint, government database, corporate hierarchy, and digital identity system sits something older and more fundamental: trust.

Not trust as emotion. Not trust as a warm personal feeling. Not trust as a moral slogan placed inside leadership books. Trust as infrastructure. Trust as the hidden operating layer that allows human beings, institutions, machines, and records to coordinate across distance and time.

Most people never notice this layer because functioning systems hide their own coordination costs. A person taps a payment terminal and assumes money moved because software worked. An employee logs into Microsoft 365 and assumes access exists because the password was accepted. A cargo ship enters Rotterdam and unloads containers because global trade appears routine. A citizen crosses a border because a passport scanner flashes green. A customer signs a contract because the legal system behind the signature is assumed to exist.

But underneath each interaction sits a massive invisible architecture of verification, legitimacy, assumptions, permissions, records, institutional memory, legal continuity, and coordinated belief.

Civilization itself depends on strangers behaving as if invisible ledgers are real. Money only functions because populations trust that numerical abstractions stored inside institutional systems will retain meaning tomorrow morning. Legal systems only function because people assume enforcement mechanisms still possess legitimacy. Cloud identity systems only work because authentication chains, certificates, session states, permissions, device posture, and conditional access decisions are continuously validated across infrastructures most users never see.

The modern world feels technological because its trust systems have become abstract. A medieval trader physically saw the guards protecting a city gate. A Roman citizen saw imperial roads, tax collectors, soldiers, and legal officials enforcing the state. A Venetian merchant saw the Rialto, the banker, the ledger, the seal, the contract, and the maritime convoy. Today the infrastructure is hidden behind interfaces. The trust layer became informational.

Yet the underlying problem never changed.

How do human beings coordinate at scale without collapsing into suspicion, fragmentation, fraud, or paralysis?

That question sits underneath empires, cybersecurity, financial systems, bureaucracies, religions, trade routes, digital platforms, AI systems, supply chains, nation-states, and corporations.

Every scalable human system eventually becomes a trust architecture. Every systemic collapse eventually becomes a trust failure.

Trust is not the opposite of infrastructure. Trust is what infrastructure is built to preserve.

02 · Civilization as coordination

Civilization begins where personal trust ends

A small tribe does not require advanced institutional infrastructure because trust remains local. People know each other directly. Reputation is immediate. Betrayal carries visible social consequences. Shared rituals, kinship, religion, language, and geographic proximity create low-cost coordination environments. Trust exists organically because the human field is small enough for memory and reputation to function.

Scale changes everything.

Once systems grow beyond direct human familiarity, trust becomes expensive. A merchant trading across oceans cannot personally verify every sailor, warehouse operator, investor, translator, port authority, tax collector, and regional governor involved in the chain. A government managing millions of citizens cannot rely on personal relationships. A multinational company cannot operate purely through sincerity and memory. An online platform serving billions cannot assume every identity is legitimate. A hospital cannot assume that every login request is safe because it appears to come from a known employee.

Scale destroys intimacy. Distance destroys certainty. Time destroys memory.

Once scale increases, civilizations face a structural problem: verification overhead. How much energy must a system spend confirming legitimacy before coordination becomes too expensive to sustain?

This is where infrastructure emerges. Passports emerge because humans need portable identity verification. Ledgers emerge because memory cannot scale. Contracts emerge because verbal promises fail across distance. Bureaucracies emerge because institutional continuity must outlive individuals. Cybersecurity emerges because digital systems cannot assume legitimacy by default. Archives emerge because power requires memory. Courts emerge because trust needs adjudication when conflict appears. Seals, stamps, signatures, certificates, tokens, and identity providers all solve the same ancient problem in different materials.
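
The overhead can be made concrete with simple counting. The sketch below is an illustration, not a historical model. It assumes that without shared infrastructure every pair of participants would have to establish trust directly, and that a hypothetical shared registry (a passport office, a ledger, an identity provider) verifies each participant once. The function names are invented for this example.

```python
def pairwise_relationships(n: int) -> int:
    """Number of distinct pairs among n participants: n * (n - 1) / 2."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n * (n - 1) // 2


def checks_with_registry(n: int) -> int:
    """Checks needed when a shared registry verifies each participant once."""
    if n < 0:
        raise ValueError("n must be non-negative")
    return n


for size in (10, 150, 10_000, 1_000_000):
    pairs = pairwise_relationships(size)
    registry = checks_with_registry(size)
    print(f"{size:>9,} participants: {pairs:>15,} pairs vs {registry:>9,} registry checks")
```

Direct trust grows roughly with the square of the population, while registry-mediated trust grows linearly. That gap is one way to read why externalized trust becomes attractive as scale increases.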

The deeper one looks into history, the clearer the pattern becomes: civilization advances by externalizing trust into systems.

That externalization takes many forms: accounting, law, seals, contracts, archives, protocols, cryptography, authentication, compliance frameworks, audit trails, bank reserves, citizenship records, tax systems, religious law, corporate governance, and diplomatic recognition. The visible forms change. The structural function remains the same.

Visible layer

Ports, platforms, passports, courts, banks, clouds, borders, dashboards, offices, markets, armies, and interfaces.

Hidden trust layer

Ledgers, credentials, legitimacy, identity, reputation, certificates, audit trails, rituals, laws, session states, and institutional memory.

Failure mode

Runs, fraud, fragmentation, paralysis, corruption, social panic, identity compromise, legitimacy collapse, and systemic entropy.

This is why high-trust environments move faster. A system with high trust density can coordinate with low friction. Contracts are shorter. Payments settle faster. Rules are obeyed with less enforcement. Leaders require fewer coercive mechanisms. Organizations need fewer defensive procedures. Information moves with less suspicion. The system spends less energy verifying the obvious and more energy producing value.

Low-trust environments behave differently. Every transaction requires proof. Every claim requires verification. Every employee needs monitoring. Every institution needs layers of compliance. Every border becomes harder. Every payment becomes more fragile. Every platform becomes more defensive. Every political statement becomes suspect. The cost of coordination rises until the system becomes trapped inside its own defensive architecture.

Trust reduces friction. Suspicion increases latency.

That is true in a market. It is true in a cloud tenant. It is true in a family business. It is true in a bureaucracy. It is true in an empire.

Darja Rihla can therefore read civilizations not only through monuments and battles, but through their trust architecture. What did a civilization allow strangers to do together? How did it verify identity? How did it preserve memory? How did it punish betrayal? How did it transmit legitimacy? How did it keep ledgers credible? How did it prevent suspicion from becoming more expensive than cooperation?

These questions reveal the infrastructure beneath the story.

03 · Digital trust

Cybersecurity is the governance of digital trust

Cybersecurity is usually described as the protection of systems, networks, identities, devices, and data. That description is correct, but incomplete. At a deeper level, cybersecurity is trust engineering. It is the discipline of deciding which identities, devices, sessions, networks, applications, locations, and behaviors should be trusted under which conditions.

This is why identity has moved to the center of modern security. The perimeter has dissolved. Work is remote. Cloud applications sit outside the old corporate network. Devices move between homes, airports, offices, hotels, and mobile networks. Employees use SaaS platforms, identity providers, APIs, collaboration tools, and third-party integrations. The old castle wall no longer contains the whole system.

In that world, every access request becomes a trust decision.

A password is not enough because passwords can be phished. A device is not enough because devices can be compromised. A location is not enough because attackers can proxy traffic. A session is not enough because session cookies can be stolen. An employee identity is not enough because identity itself can be impersonated. The system must evaluate context continuously.

This is the logic behind Conditional Access. It is not just a technical control. It is an automated trust checkpoint. The system asks: who are you, from where, on what device, with what risk signal, for what application, under what policy, and with what recent behavior?

This is also the logic behind Zero Trust. Zero Trust does not mean "trust nothing" in a literal philosophical sense. It means: do not grant durable implicit trust merely because something is inside a network, known from yesterday, or labeled as internal. Trust must be evaluated, constrained, and renewed.
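
The checkpoint questions can be sketched as a small policy function. This is a minimal illustration of the idea, not Microsoft's Conditional Access engine or any real product API. The field names, the risk labels, the country list, and the sensitive application names are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_MFA = "require_mfa"
    BLOCK = "block"


@dataclass(frozen=True)
class AccessRequest:
    user: str
    country: str
    device_compliant: bool
    risk: str              # "low", "medium", or "high" (hypothetical risk signal)
    application: str
    mfa_satisfied: bool


# Hypothetical policy values, for illustration only.
ALLOWED_COUNTRIES = {"NL", "BE", "DE"}
SENSITIVE_APPS = {"finance", "admin-portal"}


def evaluate(request: AccessRequest) -> Decision:
    """Return a trust decision for a single request, strictest rule first."""
    if request.risk == "high":
        return Decision.BLOCK
    if request.application in SENSITIVE_APPS and not request.device_compliant:
        return Decision.BLOCK
    needs_step_up = (
        request.risk == "medium"
        or request.country not in ALLOWED_COUNTRIES
        or not request.device_compliant
    )
    if needs_step_up and not request.mfa_satisfied:
        return Decision.REQUIRE_MFA
    return Decision.ALLOW


routine = AccessRequest("amina", "NL", True, "low", "mail", False)
travelling = AccessRequest("amina", "BR", True, "low", "mail", False)
risky = AccessRequest("amina", "NL", True, "high", "mail", True)

print(evaluate(routine).value)     # allow
print(evaluate(travelling).value)  # require_mfa
print(evaluate(risky).value)       # block
```

The same user receives three different answers because the decision depends on the request's context, not on the identity alone. Nothing is remembered between calls, which is the sense in which trust is evaluated and renewed rather than granted durably.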

The historical analogy is clear. A session cookie is a temporary passport. A token is a perishable unit of legitimacy. A certificate is a formalized trust statement. An identity provider is a digital registry of recognition. Multi-factor authentication is a ritual of re-verification. Conditional Access is a gatehouse that changes its answer depending on context.

Cyberattacks exploit this architecture. Many attacks do not begin by breaking mathematics. They begin by forging legitimacy. Adversary-in-the-Middle (AiTM) phishing does not need to destroy the entire system if it can capture a valid session. Token theft does not need to know the user’s password if the token convinces the platform that legitimacy has already been established. Session hijacking is not only a technical exploit. It is a forged passport accepted by the border.
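
A toy example shows why a captured token is enough. The sketch below is not how any specific identity platform issues or validates tokens. The signing key, the token layout, and the device identifiers are hypothetical, and the device check here simply compares a self-reported string, whereas a real binding would need proof that the presenter actually holds the device.

```python
import hashlib
import hmac

SECRET = b"demo-signing-key"   # hypothetical key, for illustration only


def _sign(payload: str) -> str:
    return hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(user: str, device_id: str, issued_at: int, ttl: int = 3600) -> str:
    """Issue a signed token after authentication has already succeeded."""
    payload = f"{user}|{device_id}|{issued_at + ttl}"
    return f"{payload}|{_sign(payload)}"


def validate(token: str, now: int, presenting_device: str, bind_to_device: bool) -> bool:
    """Check signature and expiry; optionally also check the presenting device."""
    try:
        user, device_id, expires, signature = token.split("|")
    except ValueError:
        return False
    payload = f"{user}|{device_id}|{expires}"
    if not hmac.compare_digest(signature, _sign(payload)):
        return False   # forged or altered token
    if now >= int(expires):
        return False   # legitimacy has expired
    if bind_to_device and presenting_device != device_id:
        return False   # valid symbol, wrong bearer
    return True


token = issue_token("amina", "laptop-42", issued_at=1_000)

# The attacker never learns the password; they only replay the captured token.
replay_unbound = validate(token, now=1_500, presenting_device="attacker-vm", bind_to_device=False)
replay_bound = validate(token, now=1_500, presenting_device="attacker-vm", bind_to_device=True)
owner_bound = validate(token, now=1_500, presenting_device="laptop-42", bind_to_device=True)
expired = validate(token, now=5_000, presenting_device="laptop-42", bind_to_device=True)

print(replay_unbound, replay_bound, owner_bound, expired)  # True False True False
```

When the platform checks only the signature and the expiry, the replayed token passes, because the token itself is the thing being trusted. Tying the token to additional context narrows what a stolen copy can do, and a short lifetime limits how long it stays useful.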

Darja Rihla reframing

Identity attacks are legitimacy attacks. They succeed when the infrastructure cannot distinguish real authority from a captured symbol of authority.

That is why the relationship between cybersecurity and civilization is not metaphorical decoration. It is structural. Both face the same question: how do you coordinate across distance when the visible sign of trust can be forged?

A medieval city needed seals because messengers could lie. A maritime republic needed ledgers because merchants could disappear. A bank needed records because memory could be manipulated. A cloud environment needs conditional verification because a login request may not represent the human it claims to represent.

The medium changes. The problem remains.

This allows Darja Rihla to connect its cybersecurity cluster to its systems and civilization clusters without forcing the connection. The link is natural. Cybersecurity is the modern laboratory where old civilizational trust problems become technical, measurable, automated, and brutally visible.

When a tenant lacks Conditional Access, it resembles a city that trusts every traveler who knows the password to the gate. When an organization protects passwords but ignores session cookies, it misunderstands the real object of trust: after login, the platform trusts the session, not the password. When users fall for AiTM phishing, the attacker has not simply tricked a person. The attacker has inserted themselves into a chain of legitimacy and persuaded the infrastructure to accept a false continuity.

That is the heart of modern cyber risk. The machine may function perfectly while the trust layer has already failed.

04 · Historical mirrors

Venice, Carthage, and the VOC were trust machines before they were empires

History often presents maritime powers through ships, ports, weapons, spices, colonies, markets, and wealth. Those visible elements matter, but they are not enough. Ships do not create empire by themselves. Ports do not create confidence by themselves. Trade routes do not maintain themselves through geography alone. The real power of maritime civilizations came from their ability to make strangers coordinate across distance.

Carthage was not only a city of merchants and sailors. It was a coordination system stretched across the western Mediterranean. Its power depended on routes, agreements, naval credibility, colonial links, commercial memory, and repeated trust between distant nodes. The visible layer was maritime movement. The hidden layer was network reliability.

Venice made this pattern even more explicit. The Venetian Republic became a trust machine because it combined maritime power with administrative credibility. The Rialto was not merely a market. It was a place where records, reputation, money, contracts, political authority, and merchant expectation converged. Venetian banking and trade relied on ledgers, state oversight, legal mechanisms, maritime convoys, public debt, and reputational enforcement.

Money could move through entries rather than constant physical transfer. Credit could circulate because records had authority. Merchants could invest in distant ventures because the system created ways to reduce uncertainty. The state itself became part of the trust architecture by protecting routes, enforcing rules, regulating markets, and maintaining institutional continuity.

This is why Venice belongs inside the Darja Rihla framework. Venice was not simply picturesque water, masks, palaces, and trade. It was a historical operating system for scalable trust.

The VOC later expressed a related logic in corporate form. Its ships, forts, uniforms, and routes were the visible layer. The deeper system was legal fiction, chartered authority, accounting, shareholder expectation, bureaucratic continuity, contracts, documentation, and administrative memory. The VOC allowed investors and agents to participate in a system larger than direct personal trust. That was its breakthrough and its danger.

The VOC did not require every participant to know every other participant. It created an institutional machine that could hold capital, contracts, rights, obligations, and expectations across oceans. It was a belief system before it was a company because people had to believe that the legal and administrative framework would still mean something after a ship had crossed the world and returned months or years later.

That belief was not soft. It was operational. It determined whether capital flowed, whether risk could be pooled, whether distant agents could act, whether investors would wait, and whether the organization could survive beyond individual lifespans.

Carthage

Network power through maritime routes, colonial links, commercial memory, and repeated coordination across the Mediterranean.

Venice

Ledger trust, public oversight, state-backed credibility, merchant reputation, and banking infrastructure around the Rialto.

The VOC

Chartered authority, accounting discipline, shareholder belief, contracts, documentation, and administrative continuity.

The comparison with modern platforms is direct. A cloud provider, a payment network, a bank, or an identity provider also depends on invisible trust layers. Users do not inspect every server, certificate chain, policy engine, and database replication process. They trust the platform because institutional signals and technical systems create confidence.

That trust can be earned, abused, automated, monetized, or lost. Historical empires and modern platforms share that vulnerability.

A civilization becomes powerful when it can reduce the cost of coordination. It becomes fragile when the infrastructure that produced that reduction becomes opaque, rigid, corrupt, or detached from legitimacy.

05 · Social cohesion

Ibn Khaldun saw the trust layer before modern systems theory named it

Ibn Khaldun did not write about session tokens, banking protocols, cloud identity, or corporate governance. Yet his insight into asabiyyah belongs at the center of any serious theory of trust infrastructure. Asabiyyah is often translated as social cohesion, group feeling, solidarity, or collective bond. In Darja Rihla terms, it can also be understood as pre-institutional trust density.

Young groups often begin with strong cohesion. They share hardship, memory, obligation, sacrifice, and purpose. The bond is not merely ideological. It is operational. It lowers coordination costs. People act together because they trust the group, recognize its authority, and accept its internal order.

As civilizations become wealthier and more complex, that original trust density often weakens. Institutions grow. Bureaucracies expand. Legal systems become more elaborate. Enforcement becomes more professional. Administration replaces intimacy. Procedure replaces shared instinct. The state, company, or civilization must spend more energy doing what cohesion once did cheaply.

This is not an argument against bureaucracy. Complex systems need administration. But it explains why bureaucracy expands in predictable ways. Some bureaucracy is the memory of civilization. Some bureaucracy is the prosthetic limb of declining trust.

When organic trust is strong, systems can operate with lighter formal control. When organic trust weakens, formal control expands. More forms, more audits, more permissions, more checkpoints, more monitoring, more escalation paths, more compliance rituals, more internal suspicion. The system does not always become more secure. It often becomes more tired.

Bureaucracy is not only organization. In aging systems, bureaucracy can become the visible scar tissue of lost trust.

This is the Khaldunian dimension of modern organizations. A young company with strong mission cohesion may coordinate quickly. People know the direction, trust each other, and act with shared purpose. As it grows, the company requires process, compliance, approvals, reporting layers, and governance. Some of that is necessary. But when process expands faster than legitimacy, the organization enters trust decay.

The same pattern appears inside states. The same pattern appears inside empires. The same pattern appears inside families, movements, religions, platforms, and institutions. Once the invisible bond weakens, visible control multiplies.

Cybersecurity shows the pattern in technical form. A system that can no longer rely on perimeter trust moves toward continuous verification. This is often necessary. But it also reveals a deeper shift: the environment has become too complex and adversarial for implicit trust to survive.

Zero Trust is therefore not only a security architecture. It is a civilizational mood. It is the technical expression of a world where scale, distance, speed, impersonation, and adversarial pressure have made implicit trust dangerous.

Ibn Khaldun helps explain why that shift feels bigger than technology. When trust density falls, systems compensate with verification infrastructure. When legitimacy becomes unstable, systems compensate with control. When cohesion weakens, administration grows. When shared assumptions collapse, every interaction becomes a security question.

This is not nostalgia for small communities or premodern life. It is structural analysis. Large systems cannot return to pure intimacy. They must design trust consciously.

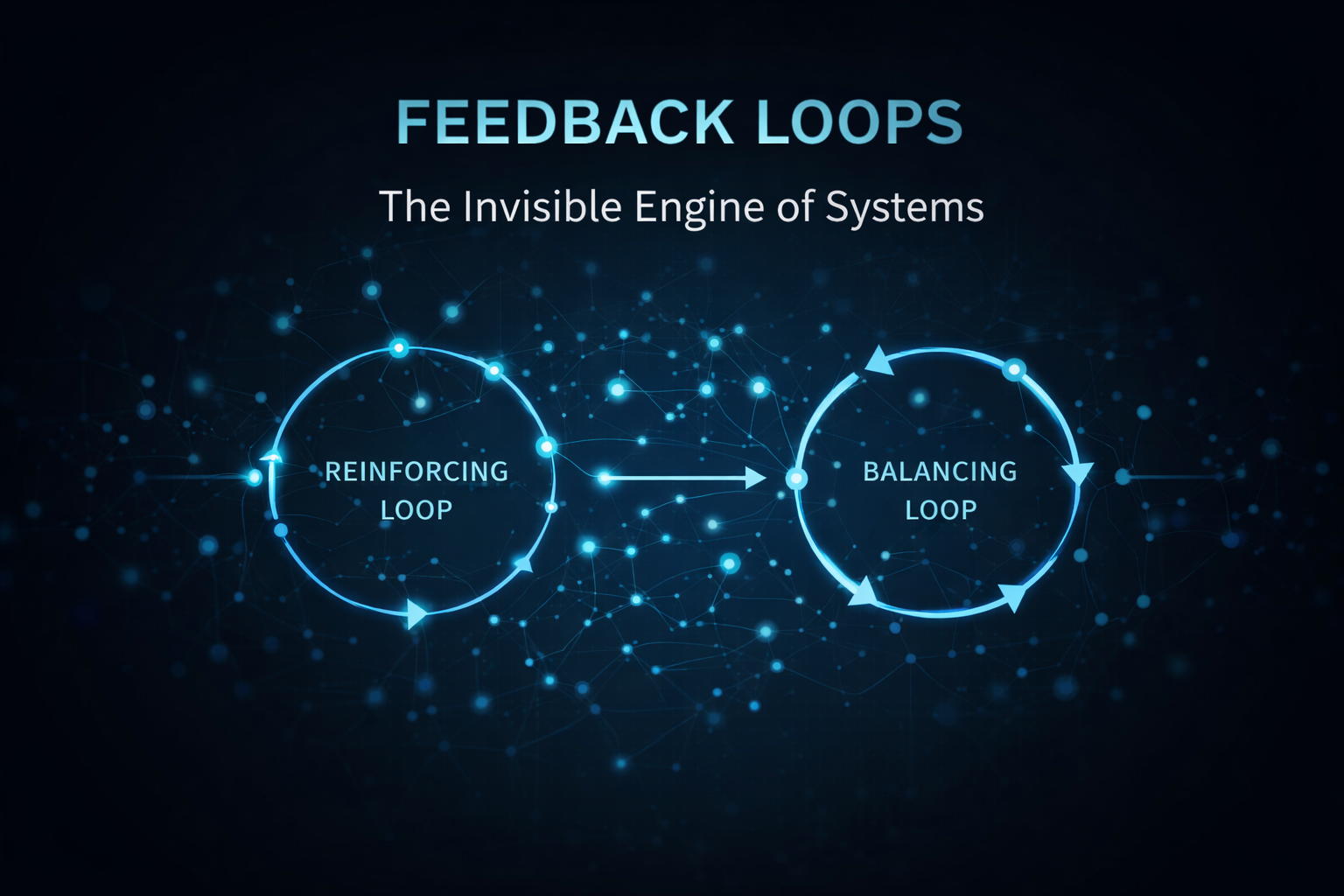

06 · Failure mode

Trust collapse is rarely one event

Trust collapse rarely begins with total destruction. It begins with friction.

People stop believing the numbers. Employees stop believing leadership. Citizens stop believing institutions. Users stop believing platforms. Investors stop believing ledgers. Customers stop believing promises. States stop believing treaties. Teams stop believing each other. Once that happens, the system may still appear intact from the outside, but its coordination layer has already begun to fracture.

Bank runs are trust collapse in financial form. The bank may have buildings, counters, accounts, employees, and systems. But if depositors no longer believe that claims can be honored, the visible institution becomes secondary. The trust layer determines the outcome.

Cyber panic follows a similar logic. An organization may not know whether tokens are compromised, which sessions are valid, which devices are safe, which identities are genuine, or which logs can be trusted. Once uncertainty spreads, normal operations slow down. Access is revoked. Passwords are reset. Sessions are killed. Applications are disabled. Meetings multiply. Every interaction becomes suspect.

Political polarization can also be read as trust decay. A society loses shared assumptions about evidence, authority, fairness, memory, media, law, and identity. When the interpretive layer fractures, every institution becomes contested. The system no longer disagrees only about policy. It disagrees about which reality is legitimate.

Corporate decay follows the same logic. A company loses trust between teams, leadership, employees, customers, and systems. Work still happens, but coordination becomes defensive. People document more than they decide. They copy more people on email. They avoid ownership. They protect themselves from blame. The organization becomes slower not because people suddenly became less intelligent, but because trust latency increased.

In civilizational terms, trust collapse produces entropy. Entropy does not always mean sudden collapse. It can mean rising disorder, rising overhead, declining coherence, and increasing energy required to maintain the same output.

Diagnostic principle

When a system spends more energy proving legitimacy than producing value, its trust infrastructure is under strain.

This is where Darja Rihla’s systems thinking cluster becomes essential. Trust decay often behaves like a feedback loop. Low trust creates more controls. More controls create more friction. More friction creates more frustration. More frustration creates more workarounds. More workarounds create more risk. More risk creates more controls. The system locks itself inside a defensive spiral.

This does not mean controls are bad. Controls are necessary. The question is whether controls are restoring trust or merely compensating for its absence. A healthy trust architecture verifies what must be verified while preserving the ability to move. An unhealthy trust architecture turns every action into a checkpoint and every participant into a suspect.
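
The defensive spiral can be sketched as a toy simulation. Every coefficient below is an arbitrary illustration value, not a measurement, and the `repair` parameter is a hypothetical stand-in for work that restores trust rather than merely compensating for its absence.

```python
def simulate_spiral(steps: int, controls: float = 0.3, repair: float = 0.0) -> list[float]:
    """Return the control level (0.0 to 1.0) after each step of a toy defensive spiral."""
    levels = []
    for _ in range(steps):
        friction = 0.8 * controls       # more controls create more friction
        workarounds = 0.7 * friction    # friction and frustration create workarounds
        risk = 0.9 * workarounds        # workarounds create risk
        # Risk triggers new controls; repair removes the need for some of them.
        controls = min(1.0, max(0.0, controls + 0.5 * risk - repair))
        levels.append(round(controls, 3))
    return levels


unrepaired = simulate_spiral(10)
repaired = simulate_spiral(10, repair=0.1)

print("no repair:  ", unrepaired)
print("with repair:", repaired)
```

In this toy model the control level ratchets upward until it hits its ceiling when nothing restores trust, and it falls when each step includes some repair. The numbers carry no empirical weight. The point is only that the loop's direction depends on whether anything in it rebuilds trust.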

The art of durable systems is not maximum trust or maximum control. It is calibrated trust.

Too much trust becomes naivety. Too much control becomes suffocation. Strong systems design verification in a way that protects coordination rather than destroying it.

07 · The civilizational shift

From default trust to continuous verification

The modern world is moving from implicit trust environments toward explicit verification environments.

Premodern societies relied heavily on proximity, kinship, guilds, religion, local reputation, shared ritual, and face-to-face recognition. Trust was local, embodied, and socially enforced. The weakness of those systems was scale. They struggled when coordination had to cross unfamiliar boundaries.

Modern systems solved scale through abstraction. Money became numbers. Identity became documents. Authority became records. Trade became contracts. Communication became networks. Memory became databases. Legitimacy became institutional. This allowed coordination to expand far beyond direct human familiarity.

Digital systems intensified the abstraction. A person can now access an enterprise environment from another continent. A transaction can settle without physical presence. A platform can host billions of identities. An attacker can imitate legitimacy through a browser session. AI systems can produce convincing language at scale. The visible sign of authenticity is easier to simulate than ever.

This creates the Zero Trust condition. The system cannot safely assume that a familiar signal is genuine. It must verify context, behavior, device health, risk, identity, and session integrity continuously.

The philosophical shift is enormous. Traditional social trust often began from recognition: you are part of us, therefore we trust you. Modern digital trust increasingly begins from verification: prove that this request should be allowed under current conditions.

That shift is not limited to cybersecurity. It appears in finance through fraud detection and transaction monitoring. It appears in borders through biometric passports. It appears in media through source verification. It appears in supply chains through provenance tracking. It appears in institutions through audit trails. It appears in AI through questions of authenticity, model integrity, and generated content. It appears in politics through disputes over legitimacy and information.

We are building verification societies because the cost of impersonation has fallen.

This is the deep link between AI, cybersecurity, institutional theory, and civilization. As systems become more powerful and more abstract, trust must become more explicit. The hidden layer must be designed rather than assumed.

But there is a danger. A civilization that solves every trust problem through surveillance, control, and verification can become efficient but spiritually brittle. It may protect transactions while losing sincerity. It may secure identities while weakening social bonds. It may reduce fraud while increasing alienation. It may authenticate every action and still fail to produce meaning.

That is why the philosophy cluster matters. Trust is not only a technical problem. It is also a moral and civilizational problem. A society cannot survive on verification alone. It also needs legitimacy, sincerity, shared purpose, restraint, and forms of trust that cannot be fully automated.

The future will belong to systems that understand both sides: trust as infrastructure and trust as moral ecology.

Digital systems need Zero Trust because impersonation is cheap. Human societies still need earned trust because meaning cannot be reduced to access control.

08 · Diagnostic engine

How to read any system through its trust infrastructure

The purpose of this article is not only to make a philosophical claim. It is to introduce a Darja Rihla method. Instead of asking only what a system looks like, ask what trust problem it is solving.

1 · Identity

Who is allowed to act?

Look for passports, accounts, roles, citizenship, tokens, seals, credentials, membership, and recognition systems.

2 · Memory

What records are trusted?

Look for ledgers, archives, logs, contracts, sacred texts, databases, audit trails, and institutional memory.

3 · Legitimacy

Why do people obey?

Look for law, religion, authority, consent, fear, competence, ritual, reputation, and shared belief.

4 · Verification

How is trust checked?

Look for audits, MFA, signatures, witnesses, courts, certificates, inspections, monitoring, and policy engines.

5 · Failure

What happens when trust breaks?

Look for runs, breaches, revolt, fraud, corruption, paralysis, fragmentation, misinformation, and institutional fatigue.

6 · Repair

How is trust restored?

Look for reform, transparency, punishment, re-authentication, leadership change, debt restructuring, renewed ritual, and improved architecture.

This framework can be applied to a state, a startup, a family business, a mosque community, a university, a cloud tenant, a bank, a maritime republic, a social platform, or an empire. The visible forms differ, but the diagnostic questions remain stable.

Who is trusted? Who verifies? What is recorded? What can be forged? What happens when memory fails? Where does legitimacy come from? How expensive has cooperation become? Does the system require more control because trust is low, or does it use control wisely to protect trust?

These questions convert history into systems analysis and cybersecurity into civilizational theory.
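
For readers who want to apply the method systematically, the six dimensions can be held in a small checklist structure. This is one possible sketch. The class and method names are invented for this example and are not part of any existing tool.

```python
from dataclasses import dataclass, field

# The six diagnostic dimensions and their guiding questions.
DIMENSIONS = {
    "identity": "Who is allowed to act?",
    "memory": "What records are trusted?",
    "legitimacy": "Why do people obey?",
    "verification": "How is trust checked?",
    "failure": "What happens when trust breaks?",
    "repair": "How is trust restored?",
}


@dataclass
class TrustReading:
    """Observations about one system, grouped by diagnostic dimension."""
    system: str
    findings: dict[str, list[str]] = field(default_factory=dict)

    def note(self, dimension: str, observation: str) -> None:
        if dimension not in DIMENSIONS:
            raise KeyError(f"unknown dimension: {dimension}")
        self.findings.setdefault(dimension, []).append(observation)

    def gaps(self) -> list[str]:
        """Dimensions with no observation yet, in the framework's order."""
        return [name for name in DIMENSIONS if name not in self.findings]


reading = TrustReading("cloud tenant")
reading.note("identity", "accounts, roles, tokens")
reading.note("verification", "MFA, policy engine")

for name in reading.gaps():
    print(f"{name}: {DIMENSIONS[name]}")
```

The `gaps` method lists the questions that have not been answered yet, which keeps a reading from stopping at the visible layer of a system.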

09 · Reading routes

Where this article connects inside Darja Rihla

Cybersecurity & Tech

This post connects directly to identity, session hijacking, AiTM phishing, and Conditional Access as digital trust infrastructure.

Systems & Strategy

This is the primary cluster. Trust behaves like a hidden system with feedback loops, emergence, friction, and entropy.

Culture & Identity

Carthage, Venice, and maritime systems become case studies in distributed trust and network power.

Philosophy & Legacy

Sincerity, legitimacy, cohesion, and moral infrastructure prevent this from becoming only a technical article.

10 · FAQ

FAQ

"Trust is infrastructure" means trust is not only a feeling between people. In scalable systems, trust becomes embedded in ledgers, laws, credentials, institutions, protocols, identity systems, audit trails, and verification processes.

The article is primarily about coordination, verification, legitimacy, feedback loops, and systemic fragility. Philosophy and history enrich the argument, but the core frame is structural.

Cybersecurity makes old trust problems visible in technical form. Identity, access, session state, phishing, tokens, and Conditional Access are all mechanisms for deciding who should be trusted under changing conditions.

Technically, Zero Trust is a security architecture. Conceptually, it reflects a broader shift from implicit trust toward continuous verification in complex, digital, adversarial environments.

Carthage, Venice, and the VOC show that trade and power depend on more than ships and wealth. They depend on trust architectures: ledgers, contracts, reputation, routes, legal authority, and institutional memory.
